Posted by Simon Long Aug 14, 2018

Introducing Datrium CloudShift

Hey guys, I have some exciting news for you. Today, we (Datrium) announced CloudShift. Below I’ve summarized some of the things that really excite me about CloudShift. Before I begin, though, I think it would be a good idea to quickly go over how Datrium DVX handles backups and replication, for those of you who might be new to this solution.

Datrium DVX Backup and Replication

As mentioned in my previous post, What is Datrium DVX?, DVX is a primary storage solution that provides extremely fast storage performance to virtual machines and applications running on DVX Compute Nodes. This is achieved by caching all required data locally on SSD devices installed in the Compute Nodes. DVX then adds a layer of protection by replicating all write IOs to the DVX Data Node, which acts as a mirror copy of all the data living on the SSDs in the Compute Nodes.

The Datrium On-Prem DVX system provides a built-in backup mechanism for protecting and restoring data. Protection Groups can be used to group a set of workloads together. Protection schedules are then applied to the Protection Group, defining how regularly data snapshots should be taken and how long they should be kept. These snapshots can then be replicated either to another On-Prem DVX system or to a Cloud DVX instance running in AWS.
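
To make the relationship between Protection Groups, schedules and retention a little more concrete, here is a minimal Python sketch. It is purely illustrative and is not the DVX API: the `Schedule` and `ProtectionGroup` classes, the `retained_snapshots` function and all field names are hypothetical, and the model assumes one snapshot per interval with a single retention window.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class Schedule:
    """Hypothetical protection schedule (not a real DVX object)."""
    interval: timedelta   # how regularly a snapshot is taken
    retention: timedelta  # how long each snapshot is kept


@dataclass
class ProtectionGroup:
    """Hypothetical grouping of workloads that share one schedule."""
    name: str
    workloads: List[str]
    schedule: Schedule


def retained_snapshots(group: ProtectionGroup,
                       start: datetime,
                       now: datetime) -> List[datetime]:
    """Return the snapshot times since `start` that are still kept at `now`."""
    kept = []
    t = start
    while t <= now:
        # A snapshot survives while its age is within the retention window.
        if now - t <= group.schedule.retention:
            kept.append(t)
        t += group.schedule.interval
    return kept


# Example: hourly snapshots kept for three hours.
group = ProtectionGroup(
    name="tier-1-apps",
    workloads=["sql-01", "web-01"],
    schedule=Schedule(interval=timedelta(hours=1), retention=timedelta(hours=3)),
)
kept = retained_snapshots(group, datetime(2018, 8, 14, 0), datetime(2018, 8, 14, 6))
print([t.strftime("%H:%M") for t in kept])
```

The snapshots returned here are the ones that would be available for restore, or as candidates for replication to a second site or to the Cloud.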

Hopefully, that gives you enough background for the next section.

Datrium CloudShift

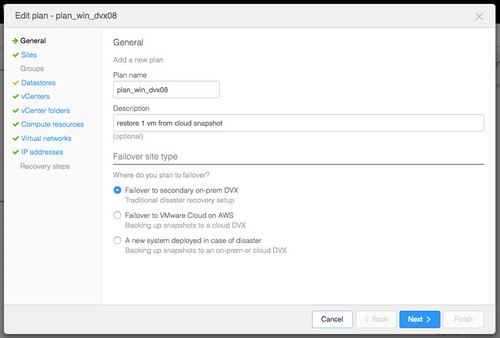

Datrium CloudShift is a SaaS-Based Disaster Recovery (DR) and Mobility orchestration solution, hosted in AWS, that can orchestrate a variety of DR scenarios:

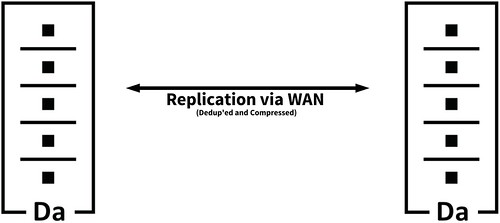

- On-Prem (primary) failover to On-Prem (secondary) using backups that are stored at the On-Prem (secondary) site.

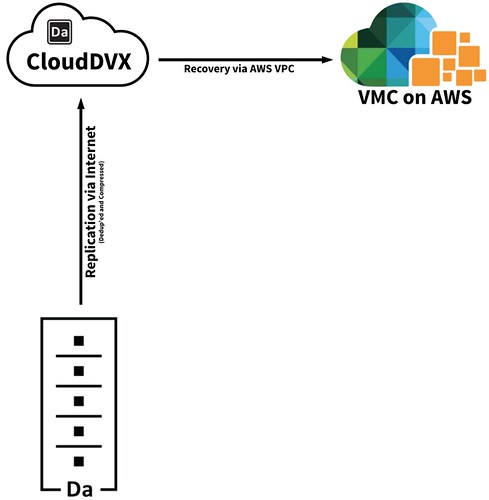

- On-Prem failover to Public Cloud using backups that are stored in the public cloud.

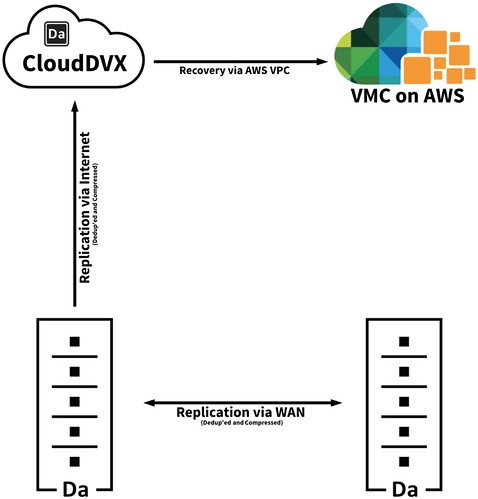

- On-Prem failover to On-Prem & Cloud using backups that are either stored at the On-Prem (secondary) site or in the public cloud.

I feel this covers the majority of failover scenarios that our customers need to restore their business in the event of a disaster.

I imagine your next question is, “If this is a SaaS-based solution, how do I get my data from one data center to another, or to the Cloud?” Well, this is where Datrium DVX and Cloud DVX come into play.

Scenario 1 – On-Prem > On-Prem

Scenario 1 is most commonly used when a customer has two data centers, both of which have a Datrium DVX. The DVX is configured to continually replicate data using Elastic Replication from the primary to the secondary data center (and possibly vice versa). In the event of a failure in one of the data centers, CloudShift will orchestrate the failover of workloads from one data center to the other. Thanks to the replication technology in DVX, the Recovery Point Objective (RPO) can be less than 1 minute.

Scenario 2 – On-Prem > Cloud

This is the perfect solution for businesses that do not have the luxury of a second data center. An On-Prem Datrium DVX continually replicates data from the physical data center to the customer’s Cloud DVX instance running in AWS. In the event of a failure, CloudShift will orchestrate the hydration of protected workloads into the customer’s VMware Cloud (VMC) on AWS Software-Defined Data Center (SDDC). If the customer isn’t an existing VMC on AWS customer, CloudShift will orchestrate the provisioning of a VMware SDDC in VMC on AWS and then the hydration of protected workloads into the newly provisioned SDDC. We call this a ‘Just-In-Time’ deployment, as we are deploying everything on demand rather than having resources pre-deployed and configured, waiting for a disaster. Think of the money that could be saved…!

The cost benefit of ‘Just-In-Time’ DR infrastructure doesn’t end there. Failing back to your primary site once the data center has been restored can also be expensive. AWS charges for egress traffic (data leaving AWS), so the more data you need to transfer from AWS to your data center, the higher the cost. Thanks to Datrium DVX Global Deduplication, only the delta changes that were made during the DR period will be copied back to your data center instead of ALL of your data.
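
To get a rough feel for the difference, here is a back-of-the-envelope calculation in Python. Every number in it is an assumption made up for illustration: the per-GB egress rate is a placeholder (check current AWS pricing for your region and volume), and the data set size and change rate are invented. The function names are hypothetical too.

```python
def egress_cost(data_gb: float, rate_per_gb: float) -> float:
    """Cost of transferring `data_gb` out of the cloud at a flat per-GB rate."""
    return data_gb * rate_per_gb


def failback_costs(total_gb: float, changed_fraction: float, rate_per_gb: float):
    """Compare copying everything back with copying only the changed data."""
    full = egress_cost(total_gb, rate_per_gb)
    delta = egress_cost(total_gb * changed_fraction, rate_per_gb)
    return full, delta


# Assumptions for illustration only, not real pricing or measurements.
ASSUMED_RATE_PER_GB = 0.09   # placeholder flat egress rate in USD
TOTAL_GB = 50_000            # a 50 TB protected data set
CHANGED_FRACTION = 0.02      # 2% of the data changed during the DR period

full, delta = failback_costs(TOTAL_GB, CHANGED_FRACTION, ASSUMED_RATE_PER_GB)
print(f"Full copy back: ${full:,.2f}")
print(f"Delta only:     ${delta:,.2f}")
```

Real egress pricing is usually tiered rather than flat, so treat this as a way to reason about the ratio between the two approaches, not as a quote.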

One other cool thing about this solution is the fact that your operations/support teams do not need to learn how to use AWS. Any interaction/configuration required to deploy the VMC on AWS SDDC and restore your workloads is handled by CloudShift. Your operations teams will only see the vSphere SDDC, which they can manage using the same tools they have been using to manage their On-Prem vSphere environments for many years.

Scenario 3 – On-Prem > On-Prem & Cloud

Many customers who have two data centers do not have enough physical resources available in the second data center for a full-site failover. Typically, only business-critical workloads are planned for failover/recovery, and in this situation scenario 1 can be used. However, a full data center disaster can take many weeks or months to fully recover from. Whilst business-critical workloads are ‘critical’ to the functioning of a business, customers will often, at some point, need access to their non-business-critical workloads as well. In scenario 3, there are two data centers, both of which have a Datrium DVX, and the customer is also utilizing Cloud DVX running in AWS. The On-Prem DVX is configured to replicate data from the primary to the secondary data center (and possibly vice versa) and also to the customer’s Cloud DVX instance. In the event of a failure in one of the data centers, CloudShift will orchestrate the failover of business-critical workloads from one data center to the other and, if required at a later stage, the recovery of non-business-critical workloads into a VMC on AWS SDDC.
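
The split described in scenario 3 can be sketched in a few lines of Python. This only illustrates the decision logic and is not how CloudShift is implemented or configured: the `Workload` class, the `business_critical` flag and the `plan_failover` function are all hypothetical names.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Workload:
    """Hypothetical workload record used only for this illustration."""
    name: str
    business_critical: bool


def plan_failover(workloads: List[Workload]) -> Tuple[List[str], List[str]]:
    """Split workloads into two recovery targets.

    Business-critical workloads fail over to the secondary data center;
    everything else is a candidate for later recovery into the cloud SDDC.
    """
    to_secondary = [w.name for w in workloads if w.business_critical]
    to_cloud = [w.name for w in workloads if not w.business_critical]
    return to_secondary, to_cloud


workloads = [
    Workload("erp-db", business_critical=True),
    Workload("intranet-wiki", business_critical=False),
    Workload("payments-api", business_critical=True),
    Workload("build-server", business_critical=False),
]
to_secondary, to_cloud = plan_failover(workloads)
print("Fail over to secondary site:", to_secondary)
print("Recover later into cloud SDDC:", to_cloud)
```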

Orchestration

When we talk about orchestration, what exactly do we mean? CloudShift customers will be able to create their own customized DR runbooks via a wizard in the vCenter Datrium User Interface (UI). Those DR runbooks are translated into a set of workflows that can enable the following:

- Workload protection

- Non-disruptive workflow testing

- Backup and replication to the public Cloud or another physical site

- Workflow execution

- Automatic creation of a VMC on AWS Software-Defined Data Center (SDDC)

- Workload restore to physical sites or to an existing or newly created VMC on AWS SDDC

- Site mapping and Re-IP of virtual machines

- Continuous plan verification compliance checks

- Report generation

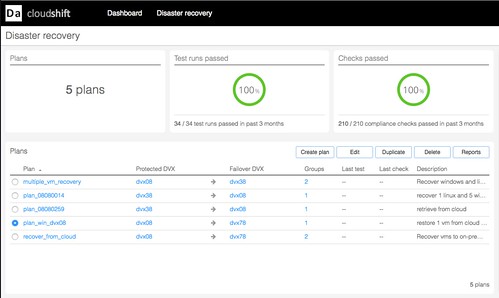

Simple Management

Another cool thing about DVX and CloudShift is that it’s all integrated into one UI, and that UI is integrated into vCenter. Everything I’ve just written about can be managed straight from the vCenter Client (both Flash and HTML5). It’s also extremely easy to create and manage DR runbooks. As mentioned earlier, CloudShift is a SaaS-Based service running in AWS. There is no requirement for additional servers/components in your data centers, nor is there software that needs to be managed or run within the virtual machines. It’s a service, and Datrium manages it for you.

DVX is very simple to configure and manage, and CloudShift is just the same. It’s almost too simple. When we demo our UI, it’s almost anticlimactic because the user barely needs to do anything.

Below are some early Beta screenshots of the CloudShift functionality. Take a look; you’ll be shocked at how simple it is to use and how powerful the outcome is.

Additional Information on Datrium CloudShift

- CloudShift Whitepaper

- CloudShift Blog Post

- CloudShift Product Page

- CloudShift Product Brief

- CloudShift in 60 Seconds (below)

- Storage Unpacked Podcast episode discussing CloudShift